The Dematerialization of the Studio: The Architect’s Toolkit in 2026

In the early decades of architectural education, the first list a student compiled was not one of books, but of physical instruments. The T-square, the set squares, the technical pens, and the translucent tracing paper were more than mere tools; they were the artifacts of a ritual. To draw a line was to commit to a physical reality. In that era, a correction was not a “Ctrl+Z” away; an error was a crisis of materiality that often meant redrawing the entire sheet. This friction was the architect’s first teacher, instilling a discipline in which the mind had to see the building in its entirety before the hand dared to mark the paper.

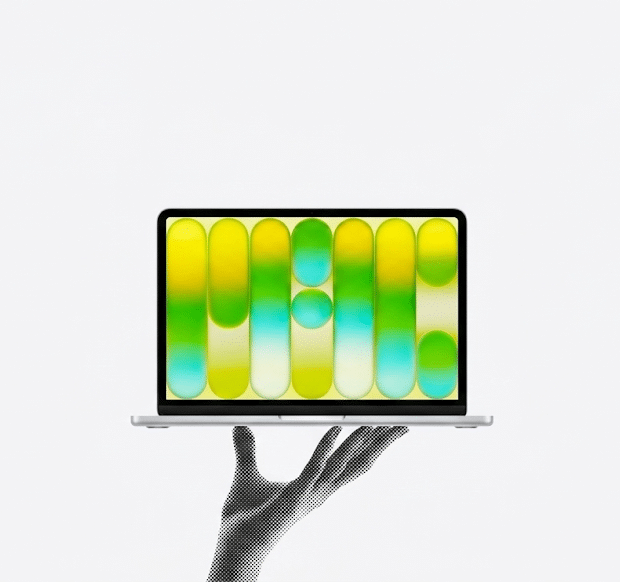

As we move through 2026, we are witnessing the final stage of a profound transformation: the total dematerialization of the architectural studio. The “Toolkit” is no longer a heavy bag of instruments or even a localized workstation. It has become a thin, digital interface connected to a global, invisible nervous system.

The Three Eras of Architectural Production

To understand where we are going, we must categorize the evolution of our tools through the lens of Compute and Authority.

| Feature | The Manual Era (Pre-1990s) | The Digital Era (1990s–2020) | The AI Cloud Era (2021–Present) |
| --- | --- | --- | --- |
| Primary Tool | T-Square & Ink Pens | High-End Workstation (PC) | Thin Clients & Cloud AI |
| Location of Work | The Drafting Table | The Local Hard Drive | The Distributed Cloud |
| Error Margin | Critical / Permanent | Reversible (Ctrl+Z) | Generative / Iterative |
| Status Symbol | Hand-drawing skill | GPU Power & RAM | Speed of Intent & API Access |

The Second Phase: The Era of the “Workstation”

With the turn of the millennium, the architect’s identity became inextricably linked to hardware. The rise of AutoCAD, Rhino, and 3ds Max demanded a new kind of literacy. It was no longer enough to understand spatial proportions; the architect had to understand the “Silicon.”

We lived through the era of the Workstation Master Race. The ability to produce a photorealistic render became a function of local GPU (Graphics Processing Unit) power. The architect became a part-time hardware technician, obsessed with clock speeds and cooling systems. In this phase, the “Studio” was a high-heat, high-noise environment where the machine was the factory of the idea.

2026: The Migration to the Cloud

Today, we are witnessing a fundamental decoupling. The question is no longer “How powerful is your computer?” but “How low is your latency?”

The arrival of Artificial Intelligence has moved the heavy lifting of architectural computation to the cloud. Whether it is generating 1,000 design iterations in Midjourney, running environmental analyses in Autodesk Forma, or rendering complex scenes via D5 Render’s cloud engines, the actual “calculation” is happening on servers that may be located thousands of miles away.

Hypothesis I: The “Window” vs. The “Factory”

In this scenario, the architect returns to a state of physical lightness. A device as thin as a MacBook Air becomes a sufficient gateway. The machine is no longer the “factory” where the building is manufactured; it is merely a “window” into a cloud-based superintelligence. This shift democratizes high-end design. A solo practitioner in a cafe can now draw on the same class of computational power as a 500-person firm, provided they have the right API access.

Hypothesis II: The Survival of Local Power (The BIM Tension)

However, we must address the “BIM Paradox.” While AI is excellent at generating images and analyzing datasets, the management of massive, high-fidelity Building Information Models (BIM) still requires significant local memory.

In 2026, a Revit or ArchiCAD file for a hospital or a giga-project can exceed 10 GB of live data. The synchronization of this data over standard internet connections remains a bottleneck. For the Lead Architect managing these models, the local Workstation remains a necessity—not for rendering, but for the fluid navigation of massive data structures. We are seeing the rise of “Hybrid Computing,” where the AI generates the concept in the cloud, but the local machine “anchors” the technical reality.
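To make the synchronization bottleneck concrete, here is a minimal back-of-the-envelope sketch in Python. It assumes a full transfer of the 10 GB model (real BIM platforms may sync only changes), decimal gigabytes, and an ideal link with no protocol overhead. The function name and the two bandwidth figures are illustrative assumptions, not measurements.

```python
def transfer_time_seconds(size_gb: float, bandwidth_mbps: float) -> float:
    """Ideal time to move `size_gb` decimal gigabytes over a `bandwidth_mbps` link.

    Assumption: no protocol overhead, no contention, no incremental sync.
    """
    if bandwidth_mbps <= 0:
        raise ValueError("bandwidth_mbps must be positive")
    size_megabits = size_gb * 1000 * 8  # 1 GB = 1000 MB, 1 byte = 8 bits
    return size_megabits / bandwidth_mbps


# Hypothetical connection speeds, chosen only for illustration.
for label, mbps in [("100 Mbps", 100), ("1 Gbps", 1000)]:
    minutes = transfer_time_seconds(10, mbps) / 60
    print(f"{label}: {minutes:.1f} min")
# 100 Mbps: 13.3 min
# 1 Gbps: 1.3 min
```

Even under these ideal assumptions, a full round trip of the model takes minutes rather than seconds, which is one way to read the case for keeping the working copy on a local machine.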

Hypothesis III: The Quantum Leap and Specialized ASICs

The next frontier, already under discussion in architectural research, is the move toward specialized AI hardware: ASICs (Application-Specific Integrated Circuits) designed solely for spatial geometry and environmental simulation.

Furthermore, the theoretical arrival of Quantum Computing promises to solve optimization problems that are currently intractable. For instance, the most efficient structural form for a 100-story tower, accounting for every wind gust and material variable, could in principle be found in seconds. We can define this efficiency through a new metric, the Compute Efficiency Ratio ($\eta$):

$$\eta = \frac{\text{Decision Complexity} \times \text{Social Impact}}{\text{Local Compute Power} + \text{Cloud Latency}}$$

In the future, a high $\eta$ will define the successful architect.
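The ratio can be explored with a small Python sketch. Because the article does not assign units to its terms, the sketch assumes all four inputs are dimensionless scores on a hypothetical 0 to 10 scale. The function name and the sample values are illustrative only.

```python
def compute_efficiency_ratio(
    decision_complexity: float,
    social_impact: float,
    local_compute: float,
    cloud_latency: float,
) -> float:
    """Evaluate eta = (complexity * impact) / (local compute + cloud latency).

    Assumption: every argument is a dimensionless score, not a measured quantity.
    """
    denominator = local_compute + cloud_latency
    if denominator <= 0:
        raise ValueError("local_compute + cloud_latency must be positive")
    return (decision_complexity * social_impact) / denominator


# Same project (hypothetical scores), two different toolkits.
workstation = compute_efficiency_ratio(8, 5, local_compute=9, cloud_latency=1)
thin_client = compute_efficiency_ratio(8, 5, local_compute=1, cloud_latency=3)
print(workstation, thin_client)  # 4.0 10.0
```

Under these assumed scores, the thin client yields the higher ratio because the formula places local compute in the denominator, which matches the article's argument that heavy local hardware is no longer the mark of the successful architect.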

What Remains Constant? The Human Decision

Despite the transition from ink to algorithms, the core of the profession remains unchanged. The tool is an amplifier of intent, not a replacement for it.

The history of the “Toolkit” is a history of removing friction.

- The pen removed the friction of the stone chisel.

- CAD removed the friction of the ink bottle.

- AI is removing the friction of “drafting labor.”

But the machine cannot decide what to build or why it should serve the human spirit. The decision remains a human burden. The architect of 2026 is no longer the one who draws the most lines, but the one who asks the most profound questions.

The Liquidation of Physical Assets: The Collapse of the “Hardware Tax”

In the previous decade, entering the “high-fidelity design” arena required a significant “technological tax.” Architects and small firms had to invest between $3,000 and $5,000 per seat in local workstations to remain competitive, an upfront Capital Expenditure (CapEx) that burdened startups. By 2026, however, we are witnessing a broad democratization of compute. With the growth of Virtual Desktop Infrastructure (VDI) and cloud-GPU services like NVIDIA Omniverse Cloud, the heavy lifting of architectural processing has migrated to remote servers. This transition has disrupted traditional hardware OEMs (Original Equipment Manufacturers): as local hardware sales for creators flattened in 2025, the industry shifted toward a subscription-based Operational Expenditure (OpEx) model. Over a low-latency cloud connection, a $600 ultrabook can now deliver the effective performance of a $6,000 rig. The result is a marked gain in productivity: “render downtime,” once a significant billable leak, has been virtually eliminated, allowing firms to allocate resources toward design iteration rather than hardware maintenance.
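The CapEx-to-OpEx trade can be sketched as simple arithmetic. The Python below uses the article's $600 ultrabook and $6,000 rig. The $150 monthly subscription is a hypothetical placeholder, not a quoted price, and the model ignores hardware refresh cycles, maintenance, and electricity.

```python
import math


def cumulative_cost(upfront: float, monthly_fee: float, months: int) -> float:
    """Total spend after `months`: one-time purchase plus recurring fees."""
    return upfront + monthly_fee * months


def breakeven_months(workstation_cost: float, thin_client_cost: float,
                     monthly_subscription: float) -> int:
    """First month in which the thin-client path has cost at least as much
    as buying the workstation outright."""
    if monthly_subscription <= 0:
        raise ValueError("monthly_subscription must be positive")
    upfront_saving = workstation_cost - thin_client_cost
    if upfront_saving <= 0:
        return 0
    return math.ceil(upfront_saving / monthly_subscription)


# $6,000 rig and $600 ultrabook come from the article; $150/month is assumed.
months = breakeven_months(6000, 600, 150)
print(months)                             # 36
print(cumulative_cost(600, 150, months))  # 6000
```

Under this assumed fee, the subscription path matches the cost of the workstation after three years and keeps accruing afterwards, so the outcome depends heavily on the actual subscription price and on how often local hardware would have been replaced.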

The Professional Paradigm Shift: From “Digital Labor” to “Curatorial Leadership”

This transition is not merely financial; it represents a radical restructuring of the architect’s professional and educational identity. In the era of localized workstations, a junior architect’s value was often measured by “technical endurance”—the ability to navigate complex software for 80% of their billable hours. In 2026, we are seeing the rise of the “Authority of Intent.” As AI automates the “drafting labor,” the architect is elevated to the role of a Design Curator. This shift is reflected in modern architectural curricula, which are pivoting away from teaching “production skills” toward “Curatorial Leadership” and “Algorithmic Ethics.” According to the RIBA Future of the Profession 2025/26 report, the most sought-after skill is no longer software proficiency, but the ability to direct AI agents and synthesize cloud-generated outputs into a coherent human and cultural context. We are witnessing the birth of the “Strategic Architect”—a professional who manages intelligent systems as an orchestral conductor rather than a digital laborer chained to a high-cost machine.

Conclusion: The Architecture of Clear Vision

The evolution from the drafting table to the cloud is a process of Cognitive Liberation. By offloading the mechanical tasks—the hatching, the dimensioning, the rendering—to the cloud, the architect is forced back into the role of the master builder and the philosopher.

The toolkit of the future is not a device you buy at a store; it is a system you curate in the cloud. But the most important tool remains the one that cannot be upgraded, automated, or dematerialized: The Human Vision. As we look at the latest news in 2026, the architects who matter are not those with the fastest machines, but those with the clearest understanding of how their buildings will change the lives of the people who inhabit them.

✦ ArchUp Editorial Insight

What this article frames as technological liberation is more precisely the outcome of a structural shift in how software capital extracts value from professional labor. The migration from localized workstations to subscription-based cloud infrastructure does not democratize design — it converts a one-time capital expenditure into a permanent operational dependency on a small number of platform providers. The architect who once owned their tools now leases access to them, and the conditions of that lease are set by Autodesk, NVIDIA, and their cloud infrastructure partners. The celebrated disappearance of the “hardware tax” simultaneously installs an invisible subscription tax, one that scales with the firm’s ambition and can be repriced unilaterally. What emerges architecturally is not cognitive liberation but cognitive outsourcing — a profession increasingly shaped by the decision boundaries that platform vendors encode into their generative systems, long before the architect poses the first question.